ISSN 1003-6059

CN 34-1089/TP

CODEN MRZHET

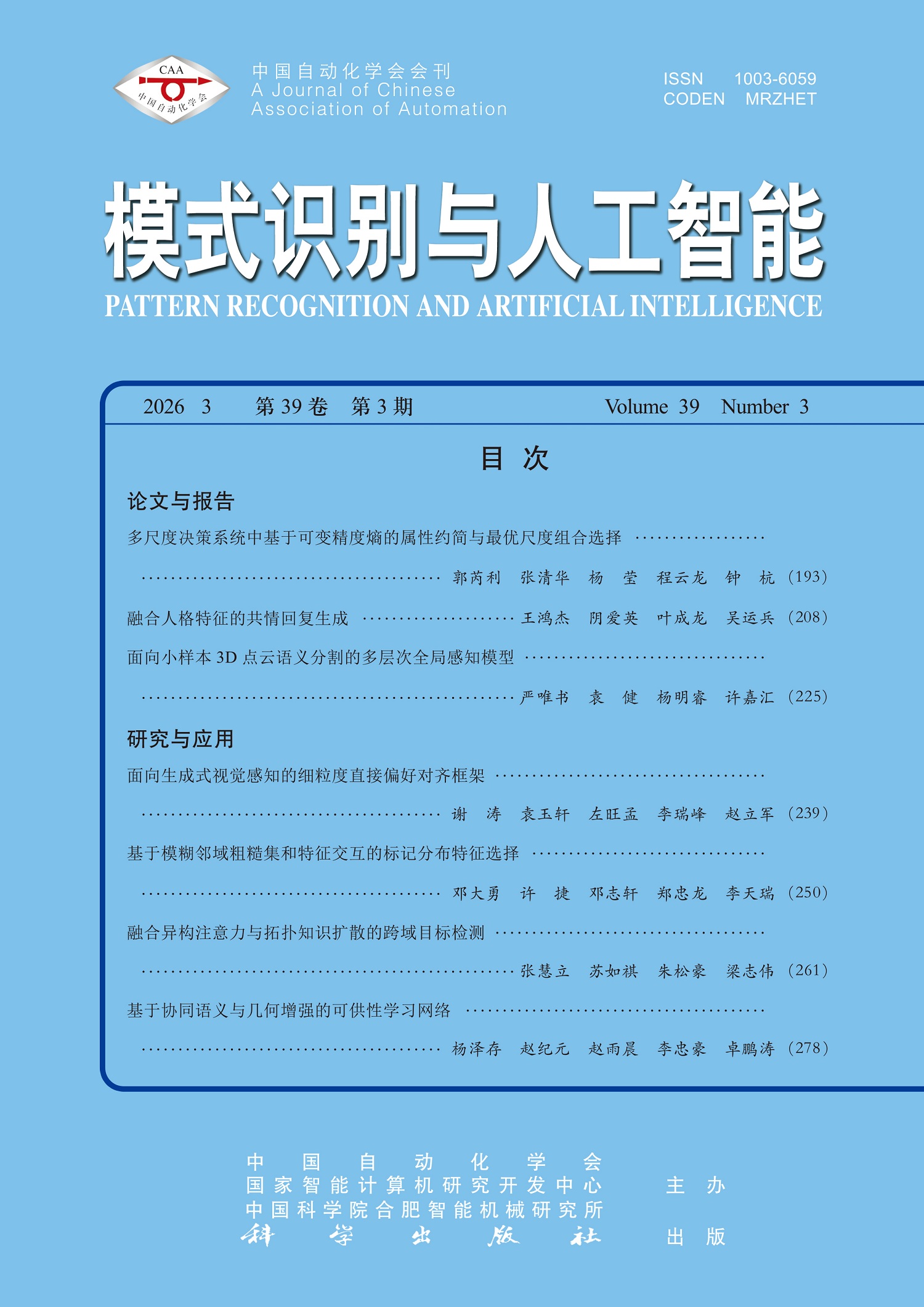

Most existing multi-scale decision systems are built on Pawlak rough sets, which have limited tolerance to noisy data. Although variable precision has been introduced to improve adaptability in uncertain environments, current methods typically perform scale selection before attribute reduction, which makes it difficult to fully exploit attribute reduction for lowering computational complexity. Information entropy has also been applied to multi-scale data analysis, but its ability to characterize uncertainty relationships in variable precision multi-scale rough set models still needs improvement. To address these issues, an attribute reduction and optimal scale combination selection method based on variable precision complementary conditional entropy is proposed. First, a monotonic variable precision complementary conditional entropy is presented to characterize the uncertainty relationships between condition attributes and decision attributes under arbitrary scale combinations. Based on this entropy, a consistency-based attribute reduction method is developed that eliminates redundant attributes while preserving decision information. Second, an optimal attribute reduction method based on classification performance is proposed to make the reduction results more effective in classification tasks. On this basis, an optimal scale combination selection algorithm is designed that uses the obtained reduced attribute set to shrink the scale search space. Experiments on UCI datasets demonstrate the effectiveness of the proposed method in terms of classification performance and robustness.
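
As a hedged illustration of entropy-driven reduction, the Python sketch below computes the classical complementary conditional entropy of a single-scale decision table, E(D|B) = sum over X in U/B and Y in U/D of |X & Y| * |X - Y| / |U|^2, and runs a greedy forward search with a backward pruning pass for an attribute subset whose entropy equals that of the full condition attribute set. It assumes plain Pawlak equivalence classes: the variable precision threshold and the multi-scale combinations described above are not modeled, and the function names are hypothetical.

```python
from collections import defaultdict


def partition(rows, attrs):
    """Group object indices into equivalence classes by their values on `attrs`."""
    blocks = defaultdict(set)
    for i, row in enumerate(rows):
        blocks[tuple(row[a] for a in attrs)].add(i)
    return list(blocks.values())


def complement_cond_entropy(rows, cond_attrs, dec_attr):
    """Classical (Pawlak, single-scale) complementary conditional entropy E(D|B).

    Sums |X & Y| * |X - Y| over condition classes X and decision classes Y,
    divided by |U|^2. It is 0 exactly when every X lies inside one decision class,
    and it never increases when the condition partition is refined.
    """
    n = len(rows)
    cond_blocks = partition(rows, cond_attrs)
    dec_blocks = partition(rows, [dec_attr])
    total = sum(len(x & y) * len(x - y) for x in cond_blocks for y in dec_blocks)
    return total / n ** 2


def greedy_reduct(rows, cond_attrs, dec_attr, eps=1e-12):
    """Hypothetical greedy reduction keeping E(D|B) equal to E(D|C) for the full set C.

    Assumption: no variable precision threshold and a single fixed scale.
    """
    target = complement_cond_entropy(rows, cond_attrs, dec_attr)
    reduct = []
    # Forward pass: repeatedly add the attribute that lowers the entropy most.
    while complement_cond_entropy(rows, reduct, dec_attr) > target + eps:
        candidates = [a for a in cond_attrs if a not in reduct]
        best = min(candidates,
                   key=lambda a: complement_cond_entropy(rows, reduct + [a], dec_attr))
        reduct.append(best)
    # Backward pass: drop any attribute whose removal still reaches the target.
    for a in list(reduct):
        rest = [b for b in reduct if b != a]
        if complement_cond_entropy(rows, rest, dec_attr) <= target + eps:
            reduct = rest
    return reduct
```

Because the entropy is monotonic under refinement, the forward pass always terminates at the latest once every attribute has been added; for a table whose decision simply copies one condition attribute, the search returns just that attribute.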

Empathetic response generation aims to understand user emotions and generate appropriate responses. Existing methods seldom consider the influence of personality traits on empathetic expression, so the generated responses tend to be uniform in style and lack personality. To address this problem, a personality-fused empathetic response generation model (PFEG) is proposed in this paper. First, a personality-enhanced encoding module is designed to make effective use of personality information: the personality traits of both interlocutors are obtained and then adapted and stylized independently. Then, an iterative reasoning and personality modulation module is constructed to deepen situational understanding. It dynamically calculates the influence intensity of personality in the current situation from the personality traits of both interlocutors and adjusts the emotional tendency and language style of the response accordingly. An emotion prediction module is introduced to accurately perceive the latent emotions of the user. Finally, the personalized gated decoding module fuses situation, emotion, knowledge, and personality information through a gated integration mechanism to generate responses that conform to individual traits and demonstrate deep empathy. Experiments on public datasets show that PFEG outperforms baseline models on multiple metrics.
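
The gated integration idea can be pictured with the minimal sketch below, which is an assumption and not PFEG's actual decoder: each information source (situation, emotion, knowledge, personality) is represented by a vector of equal length, a scalar sigmoid gate is computed from each vector using hypothetical learned parameters `gate_weights` and `gate_biases`, and the fused vector is the gate-weighted sum.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def gated_fusion(sources, gate_weights, gate_biases):
    """Fuse equal-length vectors, each scaled by its own scalar sigmoid gate.

    sources: name -> feature vector (e.g. situation, emotion, knowledge, personality)
    gate_weights, gate_biases: hypothetical learned parameters, one set per source
    Returns the fused vector and the gate value computed for each source.
    """
    dim = len(next(iter(sources.values())))
    fused = [0.0] * dim
    gates = {}
    for name, vec in sources.items():
        # Assumption: the gate depends only on the source's own vector.
        score = sum(w * v for w, v in zip(gate_weights[name], vec)) + gate_biases[name]
        g = sigmoid(score)
        gates[name] = g
        for i in range(dim):
            fused[i] += g * vec[i]
    return fused, gates
```

With all gate parameters at zero every gate equals 0.5; raising one source's bias pushes its gate toward 1, which is how a gate can let, for instance, personality information dominate the fused representation.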

Due to its limitations in global context modeling, feature alignment, and semantic guidance, few-shot point cloud semantic segmentation struggles to handle complex structures, ambiguous semantics, and noise interference. To address these issues, a multi-level global-aware model for few-shot 3D point cloud semantic segmentation (MGAM) is proposed. Based on multi-level window partitioning, the receptive field is progressively expanded to jointly model local geometry and global semantics. A dual-domain attention fusion module (DAFM) is designed to integrate channel attention and point-wise attention, fusing local and global information. A global auxiliary point mechanism (GAPM) is constructed, in which learnable global points are embedded into key layers to enhance cross-layer feature propagation. A global category-aware loss (GCAL) is developed that adds category distribution constraints to point-level supervision to emphasize small-class targets. Ablation experiments verify the effectiveness and complementarity of each module, and comparative experiments show the superior performance of MGAM, particularly in complex scenarios.
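
GCAL's exact formulation is not given in this abstract. As a stand-in for the general idea of emphasizing small classes in point-level supervision, the sketch below uses a common but different technique: cross-entropy weighted by inverse class frequency and normalized so that every class present in the labels contributes the same total weight. The function names and weighting scheme are illustrative assumptions.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels, num_classes):
    """Weight for class c is n / (num_classes * count_c); absent classes get 0."""
    counts = Counter(labels)
    n = len(labels)
    return [n / (num_classes * counts[c]) if counts[c] else 0.0
            for c in range(num_classes)]


def weighted_point_ce(probs, labels, num_classes):
    """Class-balanced cross-entropy over points.

    probs: per-point lists of predicted class probabilities
    labels: per-point integer ground-truth labels
    Assumption: this is a generic re-weighting, not the paper's GCAL.
    """
    w = inverse_frequency_weights(labels, num_classes)
    total = sum(w[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    norm = sum(w[y] for y in labels)
    return total / norm
```

With three background points and one small-class point, the single small-class point receives three times the weight of each background point, so both classes contribute equally to the loss instead of the majority class dominating it.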

Generative referring segmentation methods based on multimodal large language models (MLLMs) are limited by the supervised fine-tuning mechanism and lack in-depth exploration of ways to improve generation quality. Consequently, these methods suffer from semantic localization bias and coarse mask boundaries in complex scenarios. To address these issues, a fine-grained direct preference alignment framework for generative visual perception (FG-DPA) is proposed. The direct preference optimization (DPO) algorithm is transferred from text understanding to pixel-level segmentation. Preference pairs of high-quality and low-quality masks are constructed to guide the model toward learning more accurate visual representations in the latent space. Two types of negative samples are produced by leveraging the interactive nature of the Segment Anything Model (SAM). To address imprecise edges, adversarial point prompts are introduced within the ground-truth bounding box to generate low-quality masks with local omissions or overflows as negative examples. To address incorrect target localization, non-overlapping masks are randomly sampled in the background region to construct semantic-level negative examples. Through training on these multiple types of samples, accurate segmentation is finally achieved in conjunction with SAM. Experiments on multiple public datasets show that FG-DPA effectively suppresses localization hallucination and significantly improves the completeness and edge accuracy of generated masks, validating its effectiveness in enhancing multimodal generative visual perception.
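
For reference, the standard DPO objective for one preference pair is -log sigmoid(beta * [(log pi(y_w|x) - log pi_ref(y_w|x)) - (log pi(y_l|x) - log pi_ref(y_l|x))]). The sketch below computes it from scalar log-likelihoods. In FG-DPA terms the chosen output would be the high-quality mask and the rejected output a SAM-generated negative; how a mask's log-likelihood is obtained from the MLLM is not stated here and is left as an assumption, and the default `beta=0.1` is an illustrative choice.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(logp_chosen, logp_rejected, ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """Standard DPO loss for one preference pair.

    logp_*: log-likelihood of the preferred (chosen) and dispreferred (rejected)
    output under the trainable policy; ref_logp_*: the same under the frozen
    reference model. Assumption: these scalars are supplied by the caller.
    """
    margin = beta * ((logp_chosen - ref_logp_chosen)
                     - (logp_rejected - ref_logp_rejected))
    # -log(sigmoid(margin)) equals softplus(-margin)
    return softplus(-margin)
```

When the policy matches the reference the loss is log 2; it falls below log 2 once the policy raises the relative likelihood of the good mask over the negative one, and rises above it otherwise.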

Label distribution learning (LDL) is widely applied to handle label ambiguity. However, most algorithms struggle to extract sufficient information from feature interactions. To address this issue, a feature selection method for label distribution learning based on fuzzy neighborhood rough sets and feature interaction (FNRI) is proposed to extract more information from feature interactions. First, a fuzzy dependency relation is introduced to measure the correlation between features and labels. The correlation among features is redefined, and a fuzzy neighborhood entropy is defined to quantify the interaction information between features. Second, a feature interaction evaluation index (FIE) based on feature interaction information is constructed and combined with a dynamic weighting function to calculate the importance of features. Experiments on 14 real-world LDL datasets demonstrate the superior performance of FNRI.
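
To make "interaction information" concrete, the sketch below computes its classical Shannon form on discrete data, defined here as I(X,Y;L) - I(X;L) - I(Y;L): positive when two features are jointly more informative about the label than separately. This is not the paper's fuzzy neighborhood entropy; it assumes discrete feature values and a label reduced to a single discrete value (for example the argmax of each label distribution), and the function names are hypothetical.

```python
import math
from collections import Counter


def entropy(*cols):
    """Joint Shannon entropy (bits) of one or more equal-length discrete columns."""
    joint = list(zip(*cols))
    n = len(joint)
    return -sum(c / n * math.log2(c / n) for c in Counter(joint).values())


def mutual_info(a_cols, b_cols):
    """I(A;B) = H(A) + H(B) - H(A,B), where A and B may each span several columns."""
    return entropy(*a_cols) + entropy(*b_cols) - entropy(*a_cols, *b_cols)


def interaction_gain(x, y, label):
    """I(X,Y;L) - I(X;L) - I(Y;L).

    Assumption: `label` is already discretized, e.g. argmax of a label distribution.
    """
    return (mutual_info([x, y], [label])
            - mutual_info([x], [label])
            - mutual_info([y], [label]))
```

The XOR case shows why interactions matter for selection: with x = [0, 0, 1, 1], y = [0, 1, 0, 1], and label = x XOR y, each feature alone carries no information about the label, yet together they carry one full bit, so the gain is 1.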

To address low detection accuracy, strong background interference, and insufficient hard sample mining in complex cross-domain scenarios such as UAV aerial photography, low-light conditions, and foggy weather, a cross-domain object detection method integrating heterogeneous attention and topological knowledge diffusion (HATKD) is proposed. The method synergistically optimizes three core modules. First, a dynamic fusion feature enhancement (DFFE) module is designed, in which a dual-path heterogeneous attention mechanism captures multi-granularity spatial and channel information, thereby filtering for highly transferable features and suppressing background noise. Second, a category-aware topological knowledge diffusion module is designed to construct a global topological structure matrix. Hamiltonian graph theory is introduced to build a category prototype memory bank, and cross-domain semantic relationships are aligned through intra-class compactness and inter-class separability constraints. Finally, a spatial-aware hard sample mining (SAHSM) module is designed to optimize the weights of hard samples through three-level filtering on confidence, geometry, and features. This alleviates the foreground-background imbalance and improves the detection of hard samples. Experimental results on four datasets demonstrate the superior performance of the proposed method, particularly in small and large object detection. Visualized heatmaps further confirm its feature focusing ability, and ablation experiments validate the necessity of each module.
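
A category prototype memory bank is often maintained with an exponential moving average. The sketch below shows that generic pattern together with the two quantities the constraints above refer to: intra-class compactness (mean squared distance of features to their own prototype) and inter-class separability (smallest distance between prototypes). It is an assumption-laden illustration: the Hamiltonian-graph construction and the global topological structure matrix are not modeled, and `PrototypeBank` and its `momentum` parameter are hypothetical.

```python
import math


class PrototypeBank:
    """Hypothetical per-category prototype memory updated by exponential moving average."""

    def __init__(self, momentum=0.9):
        self.momentum = momentum
        self.protos = {}

    def update(self, label, feat):
        """Initialize a prototype on first sight, otherwise blend in the new feature."""
        if label not in self.protos:
            self.protos[label] = list(feat)
        else:
            m = self.momentum
            self.protos[label] = [m * p + (1 - m) * f
                                  for p, f in zip(self.protos[label], feat)]

    def compactness(self, feats, labels):
        """Mean squared distance of features to their own category prototype (lower is tighter)."""
        d = [sum((f - p) ** 2 for f, p in zip(feat, self.protos[y]))
             for feat, y in zip(feats, labels)]
        return sum(d) / len(d)

    def separation(self):
        """Smallest Euclidean distance between any two prototypes (higher is better).

        Assumes the bank holds at least two categories.
        """
        keys = list(self.protos)
        return min(math.dist(self.protos[a], self.protos[b])
                   for i, a in enumerate(keys) for b in keys[i + 1:])
```

In a cross-domain setting, features from both source and target images would update the same bank, so a training loss that lowers compactness and raises separation pulls same-category features from the two domains toward shared prototypes.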

Open-vocabulary 3D affordance detection, which aims to precisely localize functional regions of objects in unstructured environments, is regarded as a critical link between high-level semantic understanding and low-level robotic manipulation. However, most existing methods rely on frozen pre-trained vision-language models for shallow feature matching, and their generalization ability is limited by two challenges: semantic ambiguity in text instructions and geometry-semantic misalignment in feature space. To address these issues, a synergistic semantic and geometric enhancement based affordance learning network (SSGE-Net) is proposed in this paper. First, a physics-aware semantic enhancement module is constructed to generate structured triplets of geometric constraints, functional descriptions, and interaction logic. These triplets densify the semantics and compensate for information missing from the instructions. Second, a multi-scale geometry refinement mechanism is designed, in which local dynamic graph convolution and global self-attention capture complementary topological details to enhance feature discriminability. Finally, a deep cross-modal alignment mechanism based on Transformer decoders is proposed. Under semantic guidance, point cloud features are dynamically reconstructed by cross-attention to achieve precise anchoring. Extensive experiments on the 3D AffordanceNet dataset demonstrate that SSGE-Net achieves consistent performance improvements under both full-view and partial-view settings, validating its superiority and robustness in complex viewpoints and long-tail category scenarios.
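
The cross-attention step can be illustrated with single-head scaled dot-product attention in which each point feature is a query and the text token features serve as both keys and values. This is a simplified sketch under stated assumptions: the learned query/key/value projections, multiple heads, residual connections, and feed-forward layers of a real Transformer decoder are omitted, and point and text features are assumed to share one dimension.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def cross_attention(point_feats, text_feats):
    """Single-head cross-attention: points (queries) attend over text tokens.

    Assumption: identity projections, so keys and values are the raw text
    features, and every vector has the same dimension d.
    """
    d = len(text_feats[0])
    out = []
    for q in point_feats:
        # Scaled dot-product score between this point and every text token.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in text_feats]
        weights = softmax(scores)
        # Reconstruct the point feature as a weighted mix of text features.
        out.append([sum(w * v[j] for w, v in zip(weights, text_feats))
                    for j in range(d)])
    return out
```

A point whose feature aligns strongly with one token (for instance a "graspable" description) is reconstructed almost entirely from that token, which is the sense in which semantic guidance can anchor point features to a described affordance.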